
Genomics Inform 2022;20(1)


Abstract

Despite the success of recent genome-wide association studies investigating longitudinal traits, a large fraction of overall heritability remains unexplained. This suggests that some of the missing heritability may be accounted for by gene-gene and gene-time/environment interactions. In this paper, we develop a Bayesian variable selection method for longitudinal genetic data based on mixed models. The method jointly models the main effects and interactions of all candidate genetic variants and non-genetic factors, and has higher statistical power than previous approaches. To account for the within-subject dependence structure, we propose a grid-based approach that models only one fixed-dimensional covariance matrix and is therefore applicable to data in which subjects have different numbers of time points. We provide the theoretical basis of our Bayesian method and then illustrate its performance using data from the 1000 Genomes Project under various simulation settings. Several simulation studies show that our multivariate method increases statistical power compared with the corresponding univariate method and detects gene-time/environment interactions well. We further evaluate our method with different numbers of individuals, variants, and causal variants, as well as different trait heritabilities, and conclude that it performs reasonably well across various simulation settings.

In recent years, genome-wide association studies (GWAS) for longitudinal traits (e.g., body weight or cholesterol levels) have been carried out in cohorts in which multiple measurements have been collected from each individual [1-7]. Although GWAS have discovered a large number of novel genetic variants associated with these traits, the identified variants typically account for only a small proportion of overall heritability [8-10]. One presumed explanation for this “missing heritability” is that existing methods have low power to identify gene-gene and gene-time/environment interactions [11]. Because traditional methods are limited to identifying variants with marginal effects using a single measurement per individual, much of the useful information in longitudinal data is lost, and variants that interact with other variants or have time-varying effects may go undetected [12]. For longitudinal genetic studies, it is more appropriate to analyze multiple variants simultaneously using all available measurements.

Genetic analysis of multiple variants for longitudinal traits poses several methodological challenges. Most complex traits are controlled by multiple variants that interact with each other or with environmental factors. Modeling all candidate variants together with their epistatic effects and gene-time/environment interactions can be exceedingly difficult for longitudinal traits, because genetic data are generally high-dimensional relative to the number of samples. Bayesian multiple quantitative trait loci (QTL) mapping methods [13-16] have been proposed for modeling epistatic effects. Multiple QTL can be detected simultaneously by treating the number of QTL as a random variable and using the reversible jump Markov chain Monte Carlo (MCMC) method [13,14]. Alternatively, multiple QTL mapping can be viewed as a variable selection problem [15,16]. Within the composite model space framework, Bayesian model selection approaches have been used to identify QTL with main and epistatic effects [17], as well as QTL that interact with other covariates [18]. These approaches use a fixed-dimensional parameter space by setting an upper bound on the number of detectable QTL, and introduce latent binary variables that determine which variables are included in the model. This technique effectively reduces the model space and permits efficient MCMC algorithms. For multiple QTL mapping with multivariate traits, Banerjee et al. [19] extended the Bayesian variable selection method of Yi [16] to a model that allows different genetic models for different traits. This method provides a multiple QTL mapping strategy for correlated traits, but it does not account for the dependence structure among repeated measurements from each subject.

Several statistical methods have been proposed for dealing with within-subject variation. When data are collected at the same time points for all individuals, the measurements at each time point can be treated as one variable, so the data can be analyzed jointly as multivariate outcomes [20-23]. When data are collected at different time points for some or all individuals, the measurements cannot be grouped in this way, and standard multivariate analysis is no longer applicable. Mixed models offer an alternative for mapping QTL with longitudinal data [24]; they are flexible in modeling such unbalanced data because they allow non-constant correlations among observations. Chung and Zou [25] developed a Bayesian multiple association-mapping algorithm based on a mixed model with a built-in variable selection feature. It models multiple genes simultaneously and allows gene-gene and gene-time/environment interactions for repeatedly measured phenotypes. However, that model made the strong assumption that the covariance matrix is known up to a constant. Here, we relax that assumption.

In this paper, we develop a Bayesian variable selection method for longitudinal data in which phenotypes are not measured at a fixed set of time points for all samples. It jointly models the main effects and pairwise interactions of all candidate genetic variants. We propose a novel grid-based approach that parsimoniously models each subject's covariance matrix as a function of a single covariance matrix defined on a set of pre-selected grid time points; each observed time point is mapped to its two adjacent grid points via linear interpolation. The approach therefore deals only with a covariance matrix of fixed dimension. This covariance matrix is modeled nonparametrically using the modified Cholesky decomposition of Chen and Dunson [26], which facilitates the use of normal conjugate priors. We propose the deviance information criterion (DIC) and the Bayesian predictive information criterion (BPIC) for selecting the optimal number of grid points. The paper is organized as follows. In the Methods section, we introduce the grid-based Bayesian method for longitudinal genetic data and provide its theoretical basis. In the Results section, we present simulation results using whole-genome sequencing data from the 1000 Genomes Project to evaluate the performance of the proposed method and assess the effects of sample size, number of variants, number of causal variants, and heritability. We conclude with a discussion of the proposed method and future research.

For our simulation studies, we used the whole-genome sequencing data from the 1000 Genomes Project, which ran from 2008 to 2015 and created a large public catalogue of common human variation and genotype data from people who gave open consent and declared themselves healthy. We randomly selected 400 of the 504 individuals of East Asian (EAS) ancestry in the 1000 Genomes Project data (phase 3 version 5) and then removed single-nucleotide polymorphisms (SNPs) with a minor allele frequency < 5% or a Hardy-Weinberg equilibrium p-value < $10^{-6}$, which left 6,247,288 SNPs.
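The SNP filtering step above can be sketched as follows. This is a minimal illustration, not the authors' pipeline: it assumes genotypes coded as 0/1/2 alternate-allele dosages for one SNP, and it uses a one-degree-of-freedom chi-square goodness-of-fit test for Hardy-Weinberg equilibrium, whereas many quality-control tools use an exact test instead. The helper names (`minor_allele_frequency`, `hwe_chisq_pvalue`, `passes_qc`) are hypothetical.

```python
import math


def genotype_counts(genotypes):
    """Counts of 0/1/2 dosages (hom-ref, het, hom-alt) for one SNP."""
    return (genotypes.count(0), genotypes.count(1), genotypes.count(2))


def minor_allele_frequency(genotypes):
    n0, n1, n2 = genotype_counts(genotypes)
    alt = (n1 + 2 * n2) / (2 * (n0 + n1 + n2))
    return min(alt, 1.0 - alt)


def hwe_chisq_pvalue(genotypes):
    """1-df chi-square test of Hardy-Weinberg equilibrium (an exact test is a common alternative)."""
    n0, n1, n2 = genotype_counts(genotypes)
    n = n0 + n1 + n2
    q = (n1 + 2 * n2) / (2 * n)  # alternate-allele frequency
    p = 1.0 - q
    expected = (n * p * p, 2 * n * p * q, n * q * q)
    stat = sum((o - e) ** 2 / e for o, e in zip((n0, n1, n2), expected) if e > 0)
    # Upper tail of chi-square with 1 df: P(X > x) = erfc(sqrt(x / 2)).
    return math.erfc(math.sqrt(stat / 2.0))


def passes_qc(genotypes, maf_min=0.05, hwe_min=1e-6):
    """Keep a SNP only if MAF >= 5% and the HWE p-value is >= 1e-6."""
    return (minor_allele_frequency(genotypes) >= maf_min
            and hwe_chisq_pvalue(genotypes) >= hwe_min)
```

A SNP with genotype counts exactly at Hardy-Weinberg proportions gets a p-value of 1, while a SNP with no heterozygotes at allele frequency 0.5 is removed.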

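For a given trait, suppose we have $n$ individuals and $p$ SNPs, where individual $i$ ($i = 1, \ldots, n$) has phenotypes measured at $n_i$ time points. Let $N = \sum_{i=1}^{n} n_i$ be the total number of observations. With $q$ non-genetic covariates (such as time), there are $p(p-1)/2$ possible SNP-SNP interactions and $pq$ SNP-covariate interactions, so the number of candidate genetic effects is $d = p + p(p-1)/2 + pq$; for individual $i$, the design matrix of the SNP-SNP interaction terms alone is of dimension $n_i \times p(p-1)/2$.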
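To make the dimensionality concrete, the counts defined above can be computed directly. This sketch assumes time is the only non-genetic covariate ($q = 1$), an illustrative choice, and the function names are hypothetical; with the 1,000 SNPs used in the first simulations, $d$ already exceeds half a million candidate effects, which illustrates how quickly the model space grows.

```python
def total_observations(n_per_subject):
    """N = sum_i n_i, the total number of phenotype measurements."""
    return sum(n_per_subject)


def num_candidate_effects(p, q):
    """d = p main effects + p(p-1)/2 SNP-SNP pairs + p*q SNP-covariate interactions."""
    return p + p * (p - 1) // 2 + p * q


print(total_observations([3, 7, 5, 4]))   # 19
print(num_candidate_effects(1000, 1))     # 501500
```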

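Given $\gamma$, $\lambda$, and $x_i$, we consider the following mixed model:

$$y_i = \mu_i + x_i \Gamma \beta + p_i v_i + e_i, \qquad v_i \sim N(0, D), \quad e_i \sim N(0, \sigma^2 I_{n_i}), \qquad (1)$$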

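where $y_i = (y_{i1}, \ldots, y_{in_i})^T$ is the $n_i \times 1$ phenotype vector of individual $i$; $\mu_i = \mu 1_{n_i}$ is an $n_i \times 1$ overall mean vector; $\Gamma$ is a diagonal matrix whose upper diagonal elements are $1_q$ (i.e., the model always contains all non-genetic covariates) and whose lower diagonal elements are $\gamma$; $\beta = (\beta_t^T, \beta_g^T, \beta_{gg}^T, \beta_{gt}^T)^T$ collects the effects of the non-genetic covariates, the genetic main effects, the SNP-SNP interactions, and the SNP-time interactions; and $p_i = (p_{i1}^T, \ldots, p_{in_i}^T)^T$ maps the observed time points of individual $i$ onto the $k$ grid points $t_1 < \cdots < t_k$. If the $j$th observed time $t_{ij}$ lies strictly between the adjacent grid points $t_r$ and $t_{r+1}$, then

$$p_{ij} = \left(0_{(r-1)}^T,\ \frac{t_{r+1} - t_{ij}}{t_{r+1} - t_r},\ \frac{t_{ij} - t_r}{t_{r+1} - t_r},\ 0_{(k-r-1)}^T\right),$$

and if $t_{ij}$ coincides with the grid point $t_r$, then $p_{ij} = (0_{(r-1)}^T, 1, 0_{(k-r)}^T)$. In either case, the elements $a_{ij1}, \ldots, a_{ijk}$ of $p_{ij}$ satisfy $\sum_{r=1}^{k} a_{ijr} = 1$ and $0 \le a_{ij1}, \ldots, a_{ijk} \le 1$.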
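The grid mapping can be sketched as follows: each observed time is converted into a row $p_{ij}$ by linear interpolation between its two neighboring grid points, and the subject's marginal covariance $p_i D p_i^T + \sigma^2 I_{n_i}$ then follows from any $k \times k$ matrix $D$. This is an illustrative sketch with hypothetical function names; it assumes sorted grid points and observed times inside $[t_1, t_k]$ (how times outside the grid are handled is not specified here, so the sketch raises an error).

```python
from bisect import bisect_right


def grid_row(t, grid):
    """Row p_ij: weights of observed time t on the k sorted grid points."""
    k = len(grid)
    if not grid[0] <= t <= grid[-1]:
        raise ValueError("observed time must lie within [t_1, t_k]")
    row = [0.0] * k
    r = bisect_right(grid, t) - 1  # 0-based index of the last grid point <= t
    if r == k - 1 or t == grid[r]:
        row[r] = 1.0  # t coincides with a grid point
    else:
        width = grid[r + 1] - grid[r]
        row[r] = (grid[r + 1] - t) / width
        row[r + 1] = (t - grid[r]) / width
    return row


def subject_covariance(times, grid, D, sigma2):
    """Marginal covariance p_i D p_i^T + sigma^2 I for one subject (plain lists)."""
    P = [grid_row(t, grid) for t in times]
    k = len(grid)
    cov = [[sum(P[a][r] * D[r][s] * P[b][s] for r in range(k) for s in range(k))
            for b in range(len(P))] for a in range(len(P))]
    for a in range(len(P)):
        cov[a][a] += sigma2
    return cov
```

Because only $D$ is shared across subjects, subjects with different numbers and positions of time points all reuse the same $k \times k$ matrix.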

For Bayesian estimation of the mixed model (1), we factor $D$, the covariance matrix of the random effects, using the modified Cholesky decomposition of Chen and Dunson [26]. Let $L$ denote the $k \times k$ lower triangular Cholesky factor of $D$ with nonnegative diagonal elements, such that $D = LL^T$. Let $L = \Delta\Psi$, where $\Delta = \mathrm{diag}(\delta_1, \ldots, \delta_k)$ and $\Psi$ is a $k \times k$ matrix whose $(l, m)$th element is $\psi_{lm}$. To make $\Delta$ and $\Psi$ identifiable, we assume that $\delta_l \ge 0$, $\psi_{ll} = 1$, and $\psi_{lm} = 0$ for $l = 1, \ldots, k$ and $m = l+1, \ldots, k$. These conditions make $\Delta$ a nonnegative $k \times k$ diagonal matrix and $\Psi$ a lower triangular matrix with 1's on the diagonal. This leads to the decomposition $D = \Delta\Psi\Psi^T\Delta$, and we therefore reparameterize model (1) as

$$y_i = \mu_i + x_i \Gamma \beta + p_i \Delta \Psi b_i + e_i, \qquad (2)$$

where $b_i = (b_{i1}, \ldots, b_{ik})^T$ with $b_{ij} \sim N(0, 1)$ and $b_{ij} \perp b_{ij'}$ ($j \ne j'$).
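The modified Cholesky decomposition can be illustrated numerically. This minimal sketch (plain Python, hypothetical helper names) factors a known positive definite $D$ as $L = \Delta\Psi$, checks that $\Delta\Psi\Psi^T\Delta$ recovers $D$, and draws one random-effect vector $\Delta\Psi b_i$ with $b_i \sim N(0, I_k)$. In the sampler itself, $\delta$ and $\psi$ are updated directly rather than derived from a given $D$; the decomposition here only shows how the two parameterizations correspond.

```python
import math
import random


def cholesky(D):
    """Lower-triangular L with D = L L^T, for symmetric positive definite D."""
    k = len(D)
    L = [[0.0] * k for _ in range(k)]
    for i in range(k):
        for j in range(i + 1):
            s = sum(L[i][m] * L[j][m] for m in range(j))
            if i == j:
                L[i][i] = math.sqrt(D[i][i] - s)
            else:
                L[i][j] = (D[i][j] - s) / L[j][j]
    return L


def modified_cholesky(D):
    """Split L = Delta Psi: delta = diag(L), Psi = Delta^{-1} L (unit lower triangular)."""
    L = cholesky(D)
    k = len(L)
    delta = [L[l][l] for l in range(k)]
    psi = [[L[l][m] / delta[l] if m <= l else 0.0 for m in range(k)] for l in range(k)]
    return delta, psi


def rebuild(delta, psi):
    """Delta Psi Psi^T Delta, which should reproduce D."""
    k = len(delta)
    return [[delta[l] * delta[m] * sum(psi[l][j] * psi[m][j] for j in range(k))
             for m in range(k)] for l in range(k)]


def draw_random_effect(delta, psi, rng):
    """v_i = Delta Psi b_i with b_i ~ N(0, I_k), so Cov(v_i) = D."""
    k = len(delta)
    b = [rng.gauss(0.0, 1.0) for _ in range(k)]
    return [delta[l] * sum(psi[l][m] * b[m] for m in range(k)) for l in range(k)]


D = [[4.0, 2.0, 0.0], [2.0, 5.0, 1.0], [0.0, 1.0, 3.0]]
delta, psi = modified_cholesky(D)
v = draw_random_effect(delta, psi, random.Random(0))
```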

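Model identifiability is a property that a model must satisfy for accurate inference to be possible. A model is identifiable if it is theoretically possible to learn the true values of its underlying parameters, whereas a model is non-identifiable (unidentifiable) if two or more parameterizations are observationally equivalent [27]. The proposed Bayesian model has a potential identifiability issue associated with the block-diagonal covariance matrix of $y = (y_1^T, \ldots, y_n^T)^T$, whose $i$th diagonal block is $p_i D p_i^T + \sigma^2 I_{n_i}$ and which we write compactly as $PDP^T + \sigma^2 I_N$. The covariance parameters are identifiable if $PDP^T + \sigma^2 I_N = P\hat{D}P^T + \hat{\sigma}^2 I_N$ implies $\hat{D} = D$ and $\hat{\sigma}^2 = \sigma^2$. Equivalently, letting $\tilde{D} = D - \hat{D}$, with elements $\tilde{d}_{r,s}$, and $\tilde{\sigma}^2 = \sigma^2 - \hat{\sigma}^2$, the model is identifiable if $P\tilde{D}P^T + \tilde{\sigma}^2 I_N = 0$ implies $\tilde{D} = 0$ and $\tilde{\sigma}^2 = 0$. Because $\tilde{D}$ is symmetric, the distinct entries of $P\tilde{D}P^T + \tilde{\sigma}^2 I_N = 0$ form a homogeneous linear system $AX = 0$, where $A = (A_1^T, \ldots, A_n^T)^T$ is a $[\frac{1}{2}\sum_{i=1}^{n} n_i(n_i+1)] \times [\frac{1}{2}k(k+1) + 1]$ matrix and $X = (\tilde{d}_{1,1}, \tilde{d}_{1,2}, \ldots, \tilde{d}_{1,k}, \tilde{d}_{2,2}, \ldots, \tilde{d}_{k,k}, \tilde{\sigma}^2)^T$. This system has only the trivial solution $\tilde{D} = 0$, $\tilde{\sigma}^2 = 0$ if and only if $\operatorname{rank}(A) = \frac{1}{2}k(k+1) + 1$ (Theorem 1).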

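Lemma 1 and Theorem 1 enable us to check whether a given model is identifiable. As a toy example, suppose there are 3 grid points, which produce 2 time intervals. According to Theorem 1, the rank of $A$ must be $\frac{1}{2} \cdot 3(3+1) + 1 = 7$ for the model to be identifiable. If every subject is observed exactly at the three grid points, so that $p_i = I_3$, then

$$A_1 = \cdots = A_n = \begin{pmatrix} 1 & 0 & 0 & 0 & 0 & 0 & 1 \\ 0 & 1 & 0 & 0 & 0 & 0 & 0 \\ 0 & 0 & 1 & 0 & 0 & 0 & 0 \\ 0 & 0 & 0 & 1 & 0 & 0 & 1 \\ 0 & 0 & 0 & 0 & 1 & 0 & 0 \\ 0 & 0 & 0 & 0 & 0 & 1 & 1 \end{pmatrix}, \qquad X = (\tilde{d}_{1,1}, \tilde{d}_{1,2}, \tilde{d}_{1,3}, \tilde{d}_{2,2}, \tilde{d}_{2,3}, \tilde{d}_{3,3}, \tilde{\sigma}^2)^T,$$

so $\operatorname{rank}(A) = \frac{1}{2} \cdot 3(3+1) = 6 < 7$ and the model is not identifiable: adding a constant to each diagonal element of $D$ and subtracting the same constant from $\sigma^2$ leaves the covariance of $y$ unchanged.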
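The rank condition can be checked mechanically. The sketch below (hypothetical helper names, exact rational arithmetic via Python's `fractions` module) builds $A_i$ from a grid matrix $p_i$ using the column order $(\tilde d_{1,1}, \tilde d_{1,2}, \ldots, \tilde d_{k,k}, \tilde\sigma^2)$, reproduces the toy example ($p_i = I_3$ gives rank 6), and shows that adding one hypothetical subject observed midway between the first two grid points raises the rank to 7. That second subject is our own illustration, not a case analyzed in the paper.

```python
from fractions import Fraction
from itertools import combinations_with_replacement


def subject_rows(P):
    """A_i: one equation per distinct entry (j <= j') of p_i D~ p_i^T + sigma~^2 I = 0.

    Columns follow X = (d~_11, d~_12, ..., d~_1k, d~_22, ..., d~_kk, sigma~^2).
    """
    n, k = len(P), len(P[0])
    cols = list(combinations_with_replacement(range(k), 2))  # (r, s) with r <= s
    rows = []
    for j, jp in combinations_with_replacement(range(n), 2):
        row = []
        for r, s in cols:
            if r == s:
                row.append(P[j][r] * P[jp][r])
            else:  # d~_rs = d~_sr, so the symmetric pair contributes twice
                row.append(P[j][r] * P[jp][s] + P[j][s] * P[jp][r])
        row.append(1 if j == jp else 0)  # sigma~^2 appears only on the diagonal
        rows.append(row)
    return rows


def matrix_rank(M):
    """Rank by Gauss-Jordan elimination over exact fractions."""
    M = [[Fraction(x) for x in row] for row in M]
    rank = 0
    for c in range(len(M[0])):
        pivot = next((i for i in range(rank, len(M)) if M[i][c] != 0), None)
        if pivot is None:
            continue
        M[rank], M[pivot] = M[pivot], M[rank]
        for i in range(len(M)):
            if i != rank and M[i][c] != 0:
                f = M[i][c] / M[rank][c]
                M[i] = [a - f * b for a, b in zip(M[i], M[rank])]
        rank += 1
    return rank


def is_identifiable(P_list):
    """Rank condition of Theorem 1: rank(A) must equal k(k+1)/2 + 1."""
    k = len(P_list[0][0])
    A = [row for P in P_list for row in subject_rows(P)]
    return matrix_rank(A) == k * (k + 1) // 2 + 1


I3 = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
half = Fraction(1, 2)
print(matrix_rank(subject_rows(I3)))               # 6
print(is_identifiable([I3]))                       # False
print(is_identifiable([I3, [[half, half, 0]]]))    # True
```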

For the random effects of the proposed Bayesian model, we employ the priors presented by Chen and Dunson [26]: independent half-normal priors on the diagonal elements of $\Delta$ and normal priors on the lower triangular elements of $\Psi$. For the fixed effects, we extend the priors presented by Yi et al. [17,18] in a straightforward way.

Let $w_a = P(\gamma_a = 1)$ be the inclusion probability of the $a$th genetic effect. We assume that the indicators $\gamma_a$ are independent of each other, so the prior of $\gamma$ is $P(\gamma) = \prod_{a=1}^{r} w_a^{\gamma_a}(1 - w_a)^{1 - \gamma_a}$. The prior of $\lambda$ likewise factorizes as $P(\lambda) = \prod_{a=1}^{r} P(\lambda_a)$.
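Because the indicators are independent, the log prior of $\gamma$ is a sum of Bernoulli terms, and a Metropolis-Hastings move that toggles a single indicator $\gamma_a$ changes only the $a$th term. The sketch below is illustrative, with hypothetical function names; the proposal itself, described in Supplementary Data 1, is not reproduced.

```python
import math


def log_prior_gamma(gamma, w):
    """log P(gamma) = sum_a [gamma_a log w_a + (1 - gamma_a) log(1 - w_a)]."""
    if len(gamma) != len(w):
        raise ValueError("gamma and w must have the same length")
    return sum(math.log(wa) if g == 1 else math.log1p(-wa) for g, wa in zip(gamma, w))


def log_prior_ratio_toggle(gamma, w, a):
    """Change in log P(gamma) when indicator a is flipped; only factor a changes."""
    log_odds = math.log(w[a]) - math.log1p(-w[a])
    return log_odds if gamma[a] == 0 else -log_odds
```

Working with the single changed factor avoids recomputing the full product over all $r$ effects at every proposal.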

In model (2), each $b_{ij}$ independently follows a standard normal distribution. Thus, the joint prior distribution of $b = (b_1^T, \ldots, b_n^T)^T$ is $P(b) \stackrel{d}{=} N(0, I_{nk})$. For the diagonal elements of $\Delta$, we use $P(\delta) = \prod_{l=1}^{k} P(\delta_l) = \prod_{l=1}^{k} N^{+}(\delta_l \mid m_{l0}, s_{l0}^2)$, where $N^{+}(\delta_l \mid m_{l0}, s_{l0}^2)$ denotes the normal distribution $N(\delta_l \mid m_{l0}, s_{l0}^2)$ truncated to $\delta_l \ge 0$. For the free lower triangular elements of $\Psi$, we use $P(\Psi) \stackrel{d}{=} N(\Psi_0, R_0)$.
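For intuition about these priors, the sketch below draws $\delta$ from independent normals truncated to $[0, \infty)$ by simple rejection, and $b_i$ from $N(0, I_k)$. Rejection sampling is our illustrative choice and is efficient only when $m_{l0}$ is not far below zero; with $m_{l0} = 0$ each $\delta_l$ is half-normal and about half of the proposals are accepted. Function names and hyperparameter values are hypothetical.

```python
import random


def draw_truncated_normal(mean, sd, rng, max_tries=100_000):
    """One draw from N(mean, sd^2) truncated to [0, inf), by rejection."""
    for _ in range(max_tries):
        x = rng.gauss(mean, sd)
        if x >= 0.0:
            return x
    raise RuntimeError("acceptance rate too low; mean is far below zero")


def draw_prior(m0, s0, n_subjects, rng):
    """Prior draws of delta (length k) and b (n_subjects x k standard normals)."""
    delta = [draw_truncated_normal(m, s, rng) for m, s in zip(m0, s0)]
    b = [[rng.gauss(0.0, 1.0) for _ in range(len(m0))] for _ in range(n_subjects)]
    return delta, b
```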

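The prior for the $a$th genetic effect is a normal distribution, $P(\beta_a \mid \gamma_a, \sigma_\beta^2) \stackrel{d}{=} N(0, \gamma_a \sigma_\beta^2)$, so an excluded effect ($\gamma_a = 0$) is fixed at zero. The prior variance $\sigma_\beta^2$ is assigned a scaled inverse-$\chi^2$ prior, $P(\sigma_\beta^2) \stackrel{d}{=} \text{inv-}\chi^2(\nu_\beta, s_\beta^2)$, with mean $E(\sigma_\beta^2) = \nu_\beta s_\beta^2/(\nu_\beta - 2)$. To choose the scale $s_\beta^2$, we use the heritability of the $a$th effect, $h_a = V_a \beta_a^2 / V$, where $V_a$ is the variance of the $a$th genetic predictor and $V$ is the phenotypic variance. Setting $E(\sigma_\beta^2) = E(\beta_a^2)$ gives $s_\beta^2 = (\nu_\beta - 2)E(\sigma_\beta^2)/\nu_\beta = (\nu_\beta - 2)E(h_a)V/(\nu_\beta V_a)$. The overall mean has prior $P(\mu) \stackrel{d}{=} N(\eta_0, \tau_0^2)$ with $\eta_0 = \bar{y} = \frac{1}{N}\sum_{i=1}^{n}\sum_{j=1}^{n_i} y_{ij}$ and $\tau_0^2 = s_y^2 = \frac{1}{N-1}\sum_{i=1}^{n}\sum_{j=1}^{n_i}(y_{ij} - \bar{y})^2$, and the residual variance has prior $P(\sigma^2) \stackrel{d}{=} \text{inv-}\chi^2(\nu_\sigma, s_\sigma^2)$.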
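The hyperparameter choices above reduce to a few lines of arithmetic. In this sketch, the expected heritability $E(h_a)$, the variances $V$ and $V_a$, and $\nu_\beta$ are user-supplied illustrative values (the values used in the paper are not stated in this section), and the function names are hypothetical. The final line checks that the chosen $s_\beta^2$ gives $E(\sigma_\beta^2) = \nu_\beta s_\beta^2/(\nu_\beta - 2) = E(h_a)V/V_a$.

```python
def s_beta2_from_heritability(nu_beta, expected_h, V, V_a):
    """s_beta^2 = (nu_beta - 2) E(h_a) V / (nu_beta V_a); needs nu_beta > 2."""
    if nu_beta <= 2:
        raise ValueError("nu_beta must exceed 2 for E(sigma_beta^2) to exist")
    return (nu_beta - 2) * expected_h * V / (nu_beta * V_a)


def mu_prior_from_data(y):
    """eta_0 = overall mean and tau_0^2 = sample variance over all N observations.

    y is a list of per-subject lists, so subjects may have different n_i.
    """
    flat = [value for subject in y for value in subject]
    N = len(flat)
    eta0 = sum(flat) / N
    tau02 = sum((value - eta0) ** 2 for value in flat) / (N - 1)
    return eta0, tau02


nu, h, V, V_a = 6, 0.1, 1.0, 0.5      # illustrative values only
s2 = s_beta2_from_heritability(nu, h, V, V_a)
print(s2, nu * s2 / (nu - 2))          # second value is (up to rounding) h * V / V_a
```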

The joint posterior distribution $P(\gamma, \theta \mid y)$ is proportional to the product of the likelihood $P(y \mid \gamma, \theta)$ and the prior distributions of all unknown parameters, where $\theta = (\lambda, \beta, b, \delta, \psi, \mu, \sigma^2)^T$. To obtain MCMC samples of all parameters, we use the Metropolis-Hastings and Gibbs sampling algorithms, alternately updating each unknown parameter (or block of parameters) conditional on all the other parameters and the observed data.

For $\gamma$ and $\lambda$, we use the Metropolis-Hastings algorithm within the Gibbs sampler, because their conditional distributions have no known closed forms. To update these parameters, we extend the Metropolis-Hastings algorithm of Yi et al. [18] to our Bayesian model; the algorithms are described in Supplementary Data 1. For the other parameters, we apply Gibbs sampling. Because $b$, $\delta$, and $\psi$ have multivariate normal or half-normal priors, their full conditional distributions are easy to derive by conjugacy:

$$P(b \mid y, \gamma, \theta_{-b}) \stackrel{d}{=} N(b^*, \Sigma_b^*), \quad P(\delta_l \mid y, \gamma, \theta_{-\delta_l}) \stackrel{d}{=} N^{+}(\delta_l^*, \sigma_l^{*2}), \quad P(\psi \mid y, \gamma, \theta_{-\psi}) \stackrel{d}{=} N(\psi^*, \Sigma_\psi^*).$$

For $\beta$ and $\sigma_\beta^2$, the full conditionals are

$$P(\beta_a \mid \gamma_a = 1, \gamma_{-a}, \theta_{-\beta_a}, y) \stackrel{d}{=} N(\tilde{\mu}_a, \tilde{\sigma}_\beta^2), \quad P(\sigma_\beta^2 \mid \beta_a) \stackrel{d}{=} \text{Inv-}\chi^2\left(\nu_\beta + 1, \frac{\beta_a^2 + \nu_\beta s_\beta^2}{\nu_\beta + 1}\right),$$

and for $\mu$ and $\sigma^2$,

$$P(\mu \mid \gamma, \theta_{-\mu}, y) \stackrel{d}{=} N(\mu^*, \sigma_\mu^{2*}), \quad P(\sigma^2 \mid \gamma, \theta_{-\sigma^2}, y) \stackrel{d}{=} \text{Inv-}\chi^2\left(\nu_\sigma + N, \frac{\nu_\sigma s_\sigma^2 + N\hat{\sigma}^2}{\nu_\sigma + N}\right).$$

Here $\theta_{-x}$ denotes all parameters in $\theta$ except $x$; $b^*$, $\Sigma_b^*$, $\delta_l^*$, $\sigma_l^{*2}$, $\psi^*$, $\Sigma_\psi^*$, $\tilde{\mu}_a$, $\tilde{\sigma}_\beta^2$, $\mu^*$, and $\sigma_\mu^{2*}$ are the conditional posterior means and variances given the data and the current values of the other parameters; and $\hat{\sigma}^2 = \frac{1}{N}\sum_{i=1}^{n}\lVert y_i - \mu_i - x_i\Gamma\beta - p_i\Delta\Psi b_i\rVert^2$ is the mean squared residual.
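The scaled inverse-$\chi^2$ updates for $\sigma^2$ and $\sigma_\beta^2$ are standard conjugate steps. The sketch below computes their parameters and draws from $\text{Inv-}\chi^2(\nu, s^2)$ as $\nu s^2 / X$ with $X \sim \chi^2_\nu$, using the fact that $\chi^2_\nu$ is a Gamma distribution with shape $\nu/2$ and scale 2 (Python's `random.gammavariate(alpha, beta)` takes the scale as `beta`). It assumes the residuals $y_{ij} - \mu - x_{ij}\Gamma\beta - p_{ij}\Delta\Psi b_i$ have already been computed; function names are hypothetical.

```python
import random


def draw_scaled_inv_chi2(nu, s2, rng):
    """Draw from Inv-chi^2(nu, s2) as nu * s2 / X with X ~ chi^2_nu = Gamma(nu/2, scale=2)."""
    return nu * s2 / rng.gammavariate(nu / 2.0, 2.0)


def sigma2_conditional(residuals, nu_sigma, s_sigma2):
    """Parameters of P(sigma^2 | ...) = Inv-chi^2(nu + N, (nu s^2 + N sigma_hat^2) / (nu + N))."""
    N = len(residuals)
    sigma_hat2 = sum(r * r for r in residuals) / N  # mean squared residual
    return nu_sigma + N, (nu_sigma * s_sigma2 + N * sigma_hat2) / (nu_sigma + N)


def sigma_beta2_conditional(beta_a, nu_beta, s_beta2):
    """Parameters of P(sigma_beta^2 | beta_a) = Inv-chi^2(nu + 1, (beta_a^2 + nu s^2) / (nu + 1))."""
    return nu_beta + 1, (beta_a ** 2 + nu_beta * s_beta2) / (nu_beta + 1)


rng = random.Random(42)
df, scale = sigma2_conditional([1.0, -1.0, 2.0, 0.0], nu_sigma=2, s_sigma2=1.0)
new_sigma2 = draw_scaled_inv_chi2(df, scale, rng)  # one Gibbs draw of sigma^2
```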

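The posterior samples are used to approximate the posterior distribution of the parameters. MCMC samples from the initial iterations are discarded as “burn-in”, and the subsequent samples are thinned by keeping every $c$th sample, for a fixed integer $c$, and discarding the rest. Let $\kappa_l$ denote the $l$th SNP and $T$ the number of retained samples. The posterior inclusion probability of each SNP is estimated by its inclusion proportion in the MCMC samples,

$$P(\kappa_l \mid y) = \frac{1}{T}\sum_{t=1}^{T}\sum_{w=1}^{r} \mathbf{1}\left(\lambda_w^{(t)} = \kappa_l,\ \gamma_w^{(t)} = 1\right),$$

and its prior inclusion probability is $P(\kappa_l) = p_h$. The Bayes factor for the $l$th SNP is the ratio of its posterior odds to its prior odds,

$$BF(\kappa_l) = \frac{P(\kappa_l \mid y) / [1 - P(\kappa_l \mid y)]}{P(\kappa_l) / [1 - P(\kappa_l)]}.$$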

The Bayes factor $BF(\kappa_l)$ reflects how our belief in the importance of the $l$th SNP changes as we move from prior knowledge to posterior information. Jeffreys [28] and Yandell et al. [29] suggest the following criteria for judging the significance of each SNP: weak support if $BF(\kappa_l)$ is between 3 and 10, moderate support if it is between 10 and 30, and strong support if it is larger than 30.
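The burn-in, thinning, inclusion-probability, and Bayes-factor steps can be sketched as follows. The sketch assumes each stored MCMC iteration is a pair $(\lambda^{(t)}, \gamma^{(t)})$ of equal-length lists, counts inclusions exactly as in the posterior inclusion-probability formula, and uses the posterior-odds-over-prior-odds form of the Bayes factor. The prior inclusion probability, the toy chain, the inclusive boundaries of the support categories, and the function names are all illustrative assumptions.

```python
import math


def burn_and_thin(samples, burn_in, every):
    """Discard the first burn_in draws, then keep every `every`-th draw."""
    return samples[burn_in::every]


def inclusion_probability(samples, snp):
    """(1/T) sum_t sum_w 1(lambda_w^(t) == snp and gamma_w^(t) == 1)."""
    hits = sum(1 for lam, gam in samples
               for lam_w, gam_w in zip(lam, gam) if lam_w == snp and gam_w == 1)
    return hits / len(samples)


def bayes_factor(post, prior):
    """Posterior odds divided by prior odds."""
    if post >= 1.0:
        return math.inf
    return (post / (1.0 - post)) / (prior / (1.0 - prior))


def support(bf):
    """Evidence categories; boundary handling (>= 3, >= 10, > 30) is our choice."""
    if bf > 30:
        return "strong"
    if bf >= 10:
        return "moderate"
    if bf >= 3:
        return "weak"
    return "none"


raw = [([5, 7], [1, 1]), ([5, 7], [0, 0]), ([5, 7], [1, 0]),
       ([3, 7], [1, 1]), ([5, 9], [0, 1]), ([3, 7], [1, 1])]
kept = burn_and_thin(raw, burn_in=2, every=2)   # iterations 3 and 5
pip = inclusion_probability(kept, snp=5)        # 0.5
bf = bayes_factor(pip, prior=0.05)              # about 19
print(pip, bf, 2 * math.log(bf), support(bf))
```

The third printed value is on the 2log(BF) scale used in the genome-wide profiles.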

A critical issue with the proposed Bayesian model is how to choose the optimal number of grid points, $k$. We address this by evaluating the goodness of the predictive distributions of the candidate Bayesian models. Spiegelhalter et al. [30] proposed the DIC,

$$DIC = -2E_{\gamma,\theta \mid y}[\log P(y \mid \gamma, \theta)] + P_D,$$

where the effective number of parameters is $P_D = -2E_{\gamma,\theta \mid y}[\log P(y \mid \gamma, \theta)] + 2\log P(y \mid \bar{\gamma}, \bar{\theta})$, $\bar{\gamma}$ and $\bar{\theta}$ are posterior means, and the likelihood is evaluated using $P(y_i \mid \gamma, \theta) \stackrel{d}{=} N(\mu_i + x_i\Gamma\beta,\ p_i D p_i^T + \sigma^2 I_{n_i})$. The BPIC is defined as

$$BPIC = -2E_{\gamma,\theta \mid y}[\log P(y \mid \gamma, \theta)] + 2n\hat{b},$$

where $n\hat{b}$ is a bias-correction term. Because $n\hat{b} \approx P_D$, the simplified BPIC is

$$BPIC = -2E_{\gamma,\theta \mid y}[\log P(y \mid \gamma, \theta)] + 2P_D.$$

For both criteria, a smaller value indicates a better model.
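Given the log-likelihood evaluated at each retained MCMC draw and at the posterior means, both criteria are simple arithmetic, and $k$ is chosen as the candidate with the smallest score. This sketch assumes those log-likelihood values are already available (each is a sum over subjects of the Gaussian log-density with covariance $p_i D p_i^T + \sigma^2 I_{n_i}$); the function names and the example scores are hypothetical.

```python
def dic_and_simplified_bpic(loglik_draws, loglik_at_posterior_mean):
    """Return (DIC, simplified BPIC, P_D) from log P(y | gamma, theta) values."""
    mean_deviance = -2.0 * sum(loglik_draws) / len(loglik_draws)
    p_d = mean_deviance + 2.0 * loglik_at_posterior_mean  # effective number of parameters
    return mean_deviance + p_d, mean_deviance + 2.0 * p_d, p_d


def select_k(scores):
    """scores maps each candidate number of grid points k to a criterion value."""
    return min(scores, key=scores.get)


dic, bpic, p_d = dic_and_simplified_bpic([-10.0, -12.0, -11.0], -10.0)
print(dic, bpic, p_d)                              # 24.0 26.0 2.0
print(select_k({2: 105.0, 3: 98.5, 4: 101.2}))    # 3
```

Since the simplified BPIC equals DIC plus $P_D$, it penalizes model complexity more heavily and can favor fewer grid points than DIC.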

The proposed grid-based Bayesian mixed models are implemented in an R package named gridbayes [33], which builds on the R packages qtl [34] and qtlbim [29]. The C implementation of the MCMC algorithm and the R data-manipulation procedures were modified for longitudinal analysis. The gridbayes package uses both DIC and simplified BPIC scores to select the optimal number of grid points. The package and its source code are available at https://github.com/wonilchung/GridBayes.

To evaluate the performance of the proposed method, we conducted the following simulations. We first used 400 individuals and 1,000 SNPs from the 1000 Genomes Project data. The number of measurements per individual ranged from 3 to 7, and the total number of observations was set to 2,000. Six different setups (Setups 1–6) were considered. In Setup 1, we simulated datasets containing 10 randomly selected causal SNPs (i.e., the proportion of causal SNPs = 1%) with only main effects. For individual $i$, the phenotype values were generated from the model

$$y_i = c_{g1} \cdot \left(\sum_{a=1}^{10} x_{ia} + t_i\right) + p_i v_i + e_i.$$

To further investigate the Bayesian mixed model, we analyzed additional datasets containing two SNP-SNP interactions (Setup 2), five SNP-SNP interactions (Setup 3), two SNP-time interactions (Setup 4), five SNP-time interactions (Setup 5), or ten SNP-time interactions (Setup 6). Specifically, we simulated data according to the following models:

$$\begin{aligned}
\text{Setup 2:}\quad & y_i = c_{g2} \cdot \left(\sum_{a=1}^{6} x_{ia} + x_{i7} \cdot x_{i8} + x_{i9} \cdot x_{i10} + t_i\right) + p_i v_i + e_i, \\
\text{Setup 3:}\quad & y_i = c_{g3} \cdot \left(x_{i1} \cdot x_{i2} + x_{i3} \cdot x_{i4} + x_{i5} \cdot x_{i6} + x_{i7} \cdot x_{i8} + x_{i9} \cdot x_{i10} + t_i\right) + p_i v_i + e_i, \\
\text{Setup 4:}\quad & y_i = c_{g4} \cdot \left(\sum_{a=1}^{8} x_{ia} + \sum_{a=9}^{10} x_{ia} \cdot t_i\right) + p_i v_i + e_i, \\
\text{Setup 5:}\quad & y_i = c_{g5} \cdot \left(\sum_{a=1}^{5} x_{ia} + \sum_{a=6}^{10} x_{ia} \cdot t_i\right) + p_i v_i + e_i, \\
\text{Setup 6:}\quad & y_i = c_{g6} \cdot \left(\sum_{a=1}^{10} x_{ia} \cdot t_i\right) + p_i v_i + e_i.
\end{aligned}$$
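The genetic part of the six simulation models can be written compactly. In this sketch, $x_{ia}$ is treated as a scalar dosage (0, 1, or 2) that is constant over time, $t_i$ is the list of the subject's observation times, and the random-effect contribution $p_i v_i$ is passed in precomputed. The scaling constant `c` (standing in for the $c_g$ values, which are not given in this section), the toy genotypes, and all function names are hypothetical.

```python
import random


def genetic_part(setup, x, t):
    """Bracketed term of Setups 1-6 at each time in t; x[0] holds x_i1, ..., x[9] holds x_i10."""
    def total(lo, hi):  # sum of x_ia for a = lo..hi (1-based, inclusive)
        return sum(x[lo - 1:hi])

    def prod(a, b):  # x_ia * x_ib
        return x[a - 1] * x[b - 1]

    if setup == 1:
        return [total(1, 10) + tj for tj in t]
    if setup == 2:
        return [total(1, 6) + prod(7, 8) + prod(9, 10) + tj for tj in t]
    if setup == 3:
        return [sum(prod(a, a + 1) for a in (1, 3, 5, 7, 9)) + tj for tj in t]
    if setup == 4:
        return [total(1, 8) + total(9, 10) * tj for tj in t]
    if setup == 5:
        return [total(1, 5) + total(6, 10) * tj for tj in t]
    if setup == 6:
        return [total(1, 10) * tj for tj in t]
    raise ValueError("setup must be 1-6")


def simulate_subject(setup, x, t, c, pv, sigma, rng):
    """y_ij = c * g_ij + (p_i v_i)_j + e_ij with e_ij ~ N(0, sigma^2)."""
    g = genetic_part(setup, x, t)
    return [c * gj + pvj + rng.gauss(0.0, sigma) for gj, pvj in zip(g, pv)]


x = [1, 0, 2, 1, 0, 1, 2, 0, 1, 1]
print(genetic_part(4, x, [0.0, 1.0]))   # [7.0, 9.0]
```

In Setups 4 to 6 the SNP-time terms make the genotype effect grow with time, which is what the SNP-time interaction terms of the model are designed to detect.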

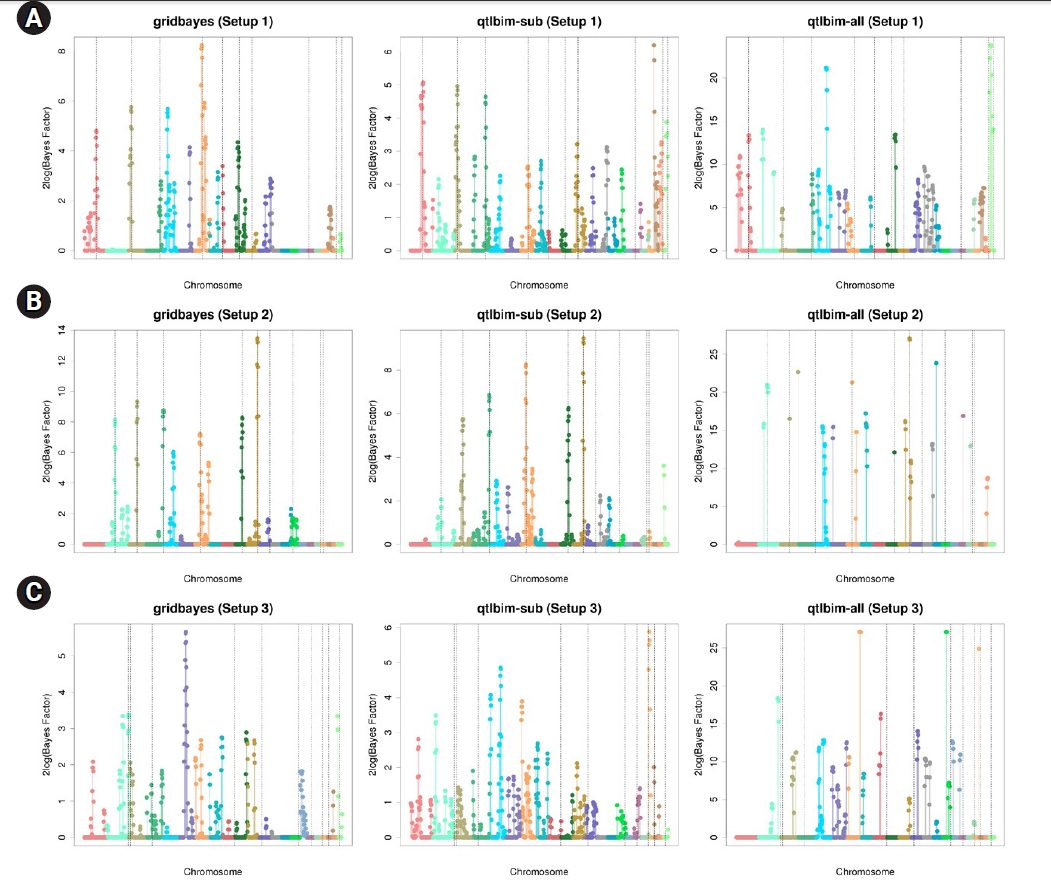

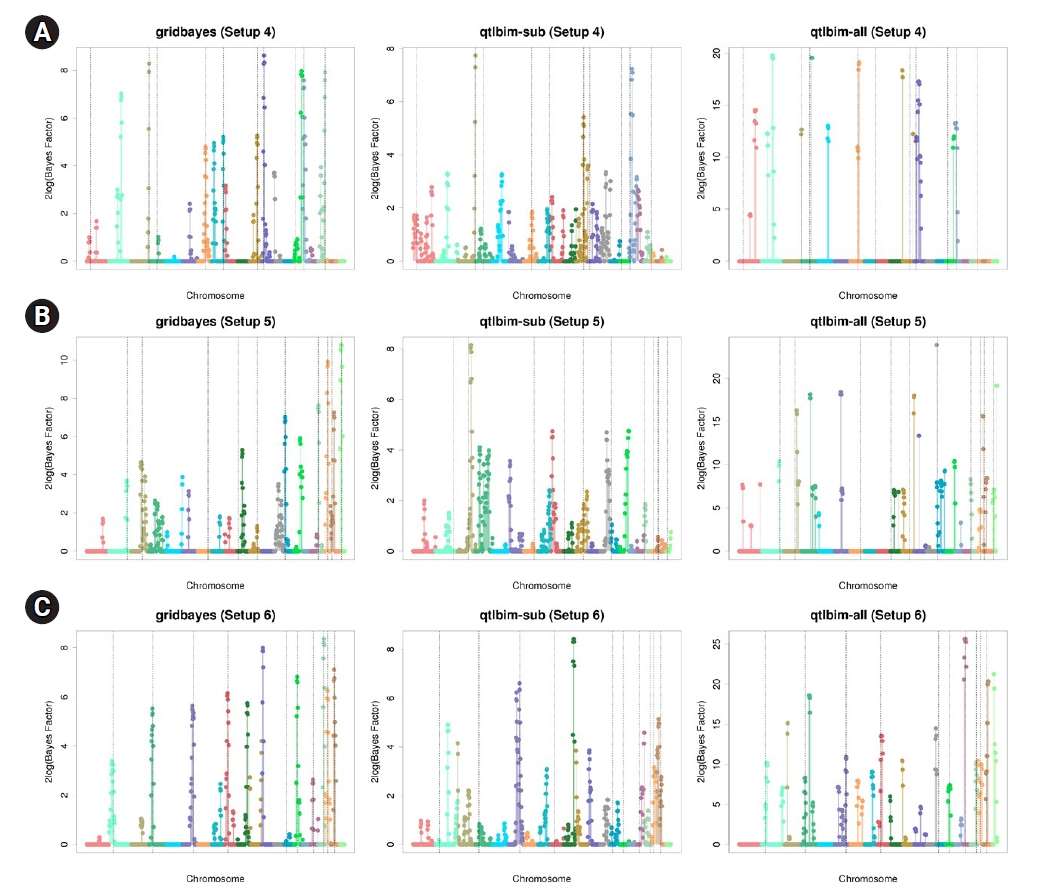

The one-dimensional genome-wide profiles of 2log(BF) for the combined main effects, epistatic effects, and SNP-time interactions of each SNP under the six setups are presented in Figs. 1 and 2, where gridbayes is applied to all time points, qtlbim-sub is qtlbim applied to one randomly selected measurement per subject, and qtlbim-all is qtlbim applied to all measurements under the (incorrect) assumption that they are independent. The dashed vertical lines indicate the locations of the 10 causal SNPs. Both gridbayes and qtlbim-sub detected the causal SNPs reasonably well, but gridbayes generally outperformed qtlbim-sub. The qtlbim-all method occasionally identified the true causal SNPs, but it produced far more false-positive findings than gridbayes and qtlbim-sub.

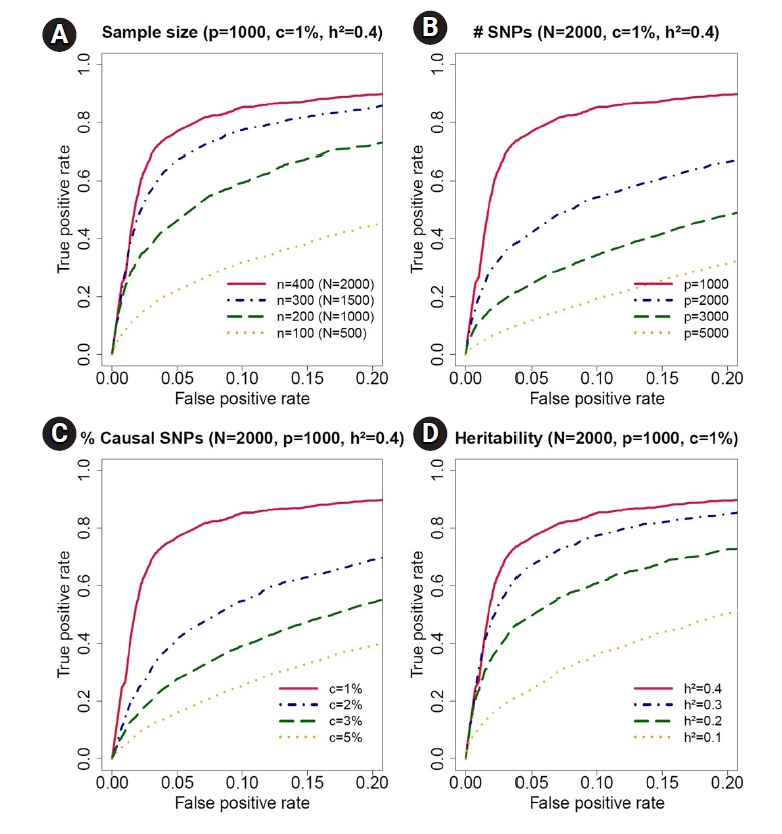

To further evaluate the performance of our Bayesian model, we calculated receiver operating characteristic (ROC) curves based on 100 simulations for each setup. The ROC curves for false-positive rates below 0.2 are presented in Fig. 3. The solid lines represent the results of gridbayes, the dot-dashed lines correspond to qtlbim-sub, and the long-dashed lines summarize the results of qtlbim-all. The ROC curves suggest that gridbayes with all measurements outperformed both qtlbim analyses, achieving higher true positive rates at the same false-positive rates.
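An ROC curve of this kind can be traced by ranking SNPs by an evidence score (for example, 2log(BF)) and sweeping the threshold downward, with each threshold giving one (false-positive rate, true-positive rate) point; truncating at a false-positive rate of 0.2 gives the region plotted in Fig. 3. This single-replicate sketch is ours: how the 100 replicates were combined is not described here, and the function names are hypothetical.

```python
def roc_points(scores, is_causal):
    """(FPR, TPR) pairs from sweeping the threshold over scores, highest first."""
    ranked = sorted(zip(scores, is_causal), key=lambda pair: pair[0], reverse=True)
    n_pos = sum(1 for c in is_causal if c)
    n_neg = len(is_causal) - n_pos
    tp = fp = 0
    points = [(0.0, 0.0)]
    i = 0
    while i < len(ranked):
        threshold = ranked[i][0]
        # SNPs with tied scores cross the threshold together.
        while i < len(ranked) and ranked[i][0] == threshold:
            if ranked[i][1]:
                tp += 1
            else:
                fp += 1
            i += 1
        points.append((fp / n_neg, tp / n_pos))
    return points


def partial_roc(points, max_fpr=0.2):
    """Keep only the part of the curve with FPR <= max_fpr."""
    return [(fpr, tpr) for fpr, tpr in points if fpr <= max_fpr]
```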

To diagnose the convergence of the MCMC samples, we ran 10 parallel chains from different initial values that were over-dispersed with respect to the true posterior distribution. With $10^4$ iterations, Geweke's Z-scores [35] for each chain, comparing the first 10% and the last 50% of the samples, indicated good convergence of all parameters. Gelman and Rubin's potential scale reduction factors [36], calculated from the 10 chains, had upper limits below 1.01 for all parameters. Supplementary Fig. 2 presents the trace plots of $\sigma^2$, $\delta_1$, $\delta_2$, $\delta_3$, $\psi_{21}$, $\psi_{31}$, and $\psi_{32}$ for each setup; all chains moved around the true values, indicating good convergence. We plotted the marginal posterior and prior densities of all parameters based on 10,000 random draws (Supplementary Fig. 3). The posterior densities appeared approximately normal, with means close to the simulated values. Supplementary Fig. 4 displays the 95% highest posterior density (HPD) intervals for $\sigma^2$, $\delta_1$, $\delta_2$, $\delta_3$, $\psi_{21}$, $\psi_{31}$, and $\psi_{32}$ for each setup; most of these intervals contained the corresponding true values. Table 1 summarizes the posterior estimates of all parameters. The posterior means and medians were close to the true values, and all the 95% HPD intervals contained the true values, demonstrating the good performance of our algorithm.
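For reference, the basic Gelman-Rubin potential scale reduction factor for a scalar parameter compares between-chain and within-chain variances. This sketch computes only the point estimate, without the degrees-of-freedom correction or the upper confidence limit that the text reports (as produced by, e.g., the R coda package's `gelman.diag`); the function name and the toy chains are hypothetical.

```python
import math
import random


def psrf(chains):
    """Point estimate of Gelman-Rubin R-hat for one scalar parameter.

    chains: list of m >= 2 equal-length lists of post-burn-in draws.
    """
    m, n = len(chains), len(chains[0])
    means = [sum(c) / n for c in chains]
    grand = sum(means) / m
    B = n * sum((mu - grand) ** 2 for mu in means) / (m - 1)  # between-chain variance
    W = sum(sum((x - mu) ** 2 for x in c) / (n - 1)
            for c, mu in zip(chains, means)) / m              # mean within-chain variance
    var_plus = (n - 1) / n * W + B / n                         # pooled variance estimate
    return math.sqrt(var_plus / W)


rng = random.Random(0)
mixed = [[rng.gauss(0.0, 1.0) for _ in range(5000)] for _ in range(4)]
stuck = [mixed[0], [x + 5.0 for x in mixed[1]]]              # second chain offset by 5
print(round(psrf(mixed), 3), round(psrf(stuck), 2))          # close to 1, then well above 1
```

Values near 1 indicate that the chains are sampling the same distribution; a chain stuck in a different region inflates the between-chain variance and pushes the factor well above 1.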

We conducted another simulation to assess whether the DIC [30] and simplified BPIC [32,37] recover the true number of grid points. The settings were almost the same as in the previous simulations, except that the true number of grid points varied from 2 to 4 (i.e., true $k = 2, 3, 4$). We simulated 100 datasets with 400 individuals and 1,000 SNPs containing 10 randomly assigned causal SNPs (i.e., the proportion of causal SNPs = 1%) with only main effects. The trait heritability was set to 40%. The phenotype values were generated from the model

$$y_i = c_{g1} \cdot \left(\sum_{a=1}^{10} x_{ia} \cdot t_i\right) + p_i v_i + e_i.$$

For a more detailed evaluation of our Bayesian method, we conducted the following simulations with 100 replications for each scenario. We first considered 400 individuals with three to seven time points each, giving 2,000 observations, and then decreased the sample size from 400 to 100 to assess the effect of sample size on the ROC curves (Fig. 4A). The simulated data contained 1,000 SNPs, of which 1% (i.e., 10 SNPs) were causal with only main effects. The trait values were generated from the model

$$y_i = c_{g1} \cdot \left(\sum_{a=1}^{10} x_{ia} \cdot t_i\right) + p_i v_i + e_i.$$

We developed a grid-based Bayesian mixed model with a built-in variable selection feature for longitudinal genetic data. The proposed method models multiple candidate SNPs simultaneously and allows SNP-SNP and SNP-time interactions, which enables the identification of SNPs with time-varying effects, a class of SNPs of great scientific and medical interest. In addition, we proposed a new grid-based method to model the covariance structure nonparametrically. The method is parsimonious in estimating the covariance matrix and, with a reasonable number of grid points, can flexibly approximate any type of covariance structure. The number of grid points must be set in advance, but DIC and simplified BPIC can be used to select the optimal number.

The simulation studies showed that the proposed Bayesian method using all time points outperformed the ordinary Bayesian method applied to either one time point or all time points. As expected, the proposed method, which used the full data, was more powerful than the corresponding univariate method, which used only a subset of the data. Furthermore, the proposed method performed better than the ordinary Bayesian method applied to all time points because it models the within-subject correlation. Further simulation studies showed that statistical power increased with more samples, fewer SNPs, a lower proportion of causal SNPs, and higher trait heritability. For our simulation studies, we used data from the 1000 Genomes Project. With only 400 independent samples of EAS ancestry, we restricted our analysis to at most 5,000 SNPs. With a sufficient sample size, our method can be applied to all available SNPs. We are currently developing a parallel computing algorithm based on the message passing interface to analyze multiple groups of SNPs simultaneously, which will make it feasible to apply our method to large-sample GWAS data.

Another important issue to mention is Bayesian model identifiability. In the Bayesian community, there is a wide diversity of views on the identifiability issue. Lindley [38] remarked that non-identifiability causes no real difficulty in Bayesian approaches. Poirier [39] and Eberly and Carlin [40] argued that a Bayesian analysis of a non-identifiable model is always possible if priors on all of the parameters are proper, since proper priors yield proper posterior distributions, and hence every parameter can be well-estimated. However, if the priors imposed on any non-identifiable model are not proper, or too close to being improper, ill-behaved posterior distributions may be generated such that the trajectory of the parameters can drift to extreme values, as demonstrated by Gelfand and Sahu [41]. In this paper, we investigated the identifiability of our Bayesian model, which motivated us to utilize only proper priors (see the Methods section). Non-identifiability occurred when the number of the grid points equaled the number of observed time points (see Supplementary Data 1), but we found that the posterior distribution behaved well due to the proper priors employed.

Notes

Acknowledgments

This work was supported by the National Research Foundation of Korea (NRF) grant funded by the Korea government (2020R1C1C1A01012657) and Basic Science Research Program through the National Research Foundation of Korea (NRF) funded by the Ministry of Education (2021R1A6A1A10044154). This work was supported by the Soongsil University Research Fund.

Supplementary Materials

Supplementary data can be found with this article online at http://www.genominfo.org.

Supplementary Fig. 1.

Time-dependent curves of averaged phenotype values for three different genotypes (0, 1, 2) at 1st causal single nucleotide polymorphism (SNP) with no SNP-time interaction and 10th causal SNP with SNP-time interaction for Setups 4 and 5.

Supplementary Fig. 2.

Trace plots of $\sigma^2$, $\delta_1$, $\delta_2$, $\delta_3$, $\psi_{21}$, $\psi_{31}$, and $\psi_{32}$ for Setups 1–6 in the simulation study. The black lines represent the values of the draws for all parameters at each iteration, and the gray lines represent the true values of the parameters.

Supplementary Fig. 3.

Posterior (solid line) and prior (dashed line) densities of the parameters for random errors and random effects for Setups 1–6. Estimated densities are based on 10,000 random draws.

Supplementary Fig. 4.

95% highest posterior density (HPD) intervals for $\sigma^2$, $\delta_1$, $\delta_2$, $\delta_3$, $\psi_{21}$, $\psi_{31}$, and $\psi_{32}$ for Setups 1–6. The blue lines represent the 95% HPD intervals (100 replicates).

Supplementary Table 1.

Posterior means, medians, standard deviations and 95% HPD intervals of the parameters for random errors and random effects in the simulation study for sample size

Supplementary Table 2.

Posterior means, medians, standard deviations, and 95% HPD intervals of the parameters for random errors and random effects in the simulation study for number of SNPs

Supplementary Table 3.

Posterior means, medians, standard deviations, and 95% HPD intervals of the parameters for random errors and random effects in the simulation study for proportion of causal SNPs

Supplementary Table 4.

Posterior means, medians, standard deviations, and 95% HPD intervals of the parameters for random errors and random effects in the simulation study for heritability

Supplementary Table 5.

Average DIC scores and simplified BPIC scores over 100 replications in the simulation study for sample size, number of SNPs, proportion of causal SNPs and heritability

Fig. 1.

Genome-wide profiles of 2log(BF) for all combined effects using gridbayes with all time points, qtlbim with one randomly-selected time point (qtlbim-sub) and qtlbim with all time points (qtlbim-all) for Setups 1 (A), 2 (B), and 3 (C).

Fig. 2.

Genome-wide profiles of 2log(BF) for all combined effects using gridbayes with all time points, qtlbim with one randomly-selected time point (qtlbim-sub) and qtlbim with all time points (qtlbim-all) for Setups 4 (A), 5 (B), and 6 (C).

Fig. 3.

Receiver operating characteristic curve analyses in the simulation study for Setups 1 (A), 2 (B), 3 (C), 4 (D), 5 (E), and 6 (F). The red solid lines represent the results of gridbayes, the blue dot-dashed lines correspond to qtlbim-sub, and the green long-dashed lines display the results from qtlbim-all. gridbayes: grid-based Bayesian mixed models with all the data; qtlbim-sub: qtlbim with a subset of each simulated dataset in which only one measurement from each subject was randomly selected; qtlbim-all: qtlbim with all the data, (incorrectly) assuming that all the measurements were independent. G, gene; T, time.

Fig. 4.

Receiver operating characteristic curve analyses in the simulation study for sample size, number of single-nucleotide polymorphisms (SNPs), proportion of causal SNPs, and heritability. (A) We decreased the sample size from n = 400 (total number of observations, N = 2,000) to n = 100 (N = 1,000) to assess the effect of sample size on the receiver operating characteristic curves. The simulation data contained p = 1,000 SNPs, c = 1% causal SNPs, and $h^2$ = 40% trait heritability. (B) We increased the number of SNPs from p = 1,000 to p = 5,000 to evaluate the effect of the number of SNPs. The simulation data contained N = 2,000 observations, c = 1% causal SNPs, and $h^2$ = 40% trait heritability. (C) We increased the proportion of causal SNPs from c = 1% to 5%. The simulation data contained N = 2,000 observations, p = 1,000 SNPs, and $h^2$ = 40% trait heritability. (D) We decreased the trait heritability from $h^2$ = 40% to $h^2$ = 10%. The simulation data contained N = 2,000 observations, p = 1,000 SNPs, and c = 1% causal SNPs.

Table 1.

Posterior means, medians, standard deviations, and 95% HPD intervals of the parameters for random errors and random effects in the simulation study

Table 2.

Average DIC scores and simplified BPIC scores over 100 replications and the proportion selecting the model with the correct number of grid points using the proposed Bayesian model

DIC, deviance information criterion; BPIC, Bayesian predictive information criterion; Avg DIC, average deviance information criterion scores over 100 replications; #Sel (%), proportion selecting the model with the correct number of grid points; Avg Sim BPIC, average simplified Bayesian predictive information criterion scores over 100 replications; Avg PD, average $P_D$ (effective number of parameters).

Table 3.

Simulation settings for all simulation setups in the Results section based on genetic effect terms, the number of grid points (k), sample size (n), number of observations (N), number of SNPs (p), number of causal SNPs (c), and trait-heritability (h2)

References

1. Sabatti C, Service SK, Hartikainen AL, Pouta A, Ripatti S, Brodsky J, et al. Genome-wide association analysis of metabolic traits in a birth cohort from a founder population. Nat Genet 2009;41:35ŌĆō46.

2. Mei H, Chen W, Jiang F, He J, Srinivasan S, Smith EN, et al. Longitudinal replication studies of GWAS risk SNPs influencing body mass index over the course of childhood and adulthood. PLoS One 2012;7:e31470.

3. Furlotte NA, Eskin E, Eyheramendy S. Genome-wide association mapping with longitudinal data. Genet Epidemiol 2012;36:463ŌĆō471.

4. Das K, Li J, Fu G, Wang Z, Li R, Wu R. Dynamic semiparametric Bayesian models for genetic mapping of complex trait with irregular longitudinal data. Stat Med 2013;32:509ŌĆō523.

5. Couto Alves A, De Silva NM, Karhunen V, Sovio U, Das S, Taal HR, et al. GWAS on longitudinal growth traits reveals different genetic factors influencing infant, child, and adult BMI. Sci Adv 2019;5:eaaw3095.

6. Gouveia MH, Bentley AR, Leonard H, Meeks KA, Ekoru K, Chen G, et al. Trans-ethnic meta-analysis identifies new loci associated with longitudinal blood pressure traits. Sci Rep 2021;11:4075.

7. Chung W. Statistical models and computational tools for predicting complex traits and diseases. Genomics Inform 2021;19:e36.

8. Manolio TA, Collins FS, Cox NJ, Goldstein DB, Hindorff LA, Hunter DJ, et al. Finding the missing heritability of complex diseases. Nature 2009;461:747ŌĆō753.

9. Visscher PM, Brown MA, McCarthy MI, Yang J. Five years of GWAS discovery. Am J Hum Genet 2012;90:7ŌĆō24.

10. Chung W, Chen J, Turman C, Lindstrom S, Zhu Z, Loh PR, et al. Efficient cross-trait penalized regression increases prediction accuracy in large cohorts using secondary phenotypes. Nat Commun 2019;10:569.

12. Smith EN, Chen W, Kahonen M, Kettunen J, Lehtimaki T, Peltonen L, et al. Longitudinal genome-wide association of cardiovascular disease risk factors in the Bogalusa heart study. PLoS Genet 2010;6:e1001094.

13. Satagopan JM, Yandell BS, Newton MA, Osborn TC. A Bayesian approach to detect quantitative trait loci using Markov chain Monte Carlo. Genetics 1996;144:805–816.

14. Yi N, Xu S. Mapping quantitative trait loci with epistatic effects. Genet Res 2002;79:185–198.

15. Yi N, George V, Allison DB. Stochastic search variable selection for identifying multiple quantitative trait loci. Genetics 2003;164:1129–1138.

16. Yi N. A unified Markov chain Monte Carlo framework for mapping multiple quantitative trait loci. Genetics 2004;167:967–975.

17. Yi N, Yandell BS, Churchill GA, Allison DB, Eisen EJ, Pomp D. Bayesian model selection for genome-wide epistatic quantitative trait loci analysis. Genetics 2005;170:1333–1344.

18. Yi N, Shriner D, Banerjee S, Mehta T, Pomp D, Yandell BS. An efficient Bayesian model selection approach for interacting quantitative trait loci models with many effects. Genetics 2007;176:1865–1877.

19. Banerjee S, Yandell BS, Yi N. Bayesian quantitative trait loci mapping for multiple traits. Genetics 2008;179:2275–2289.

20. Wu WR, Li WM, Tang DZ, Lu HR, Worland AJ. Time-related mapping of quantitative trait loci underlying tiller number in rice. Genetics 1999;151:297–303.

21. Wu W, Zhou Y, Li W, Mao D, Chen Q. Mapping of quantitative trait loci based on growth models. Theor Appl Genet 2002;105:1043–1049.

22. Ma CX, Casella G, Wu R. Functional mapping of quantitative trait loci underlying the character process: a theoretical framework. Genetics 2002;161:1751–1762.

23. Yap JS, Fan J, Wu R. Nonparametric modeling of longitudinal covariance structure in functional mapping of quantitative trait loci. Biometrics 2009;65:1068–1077.

24. Yang R, Tian Q, Xu S. Mapping quantitative trait loci for longitudinal traits in line crosses. Genetics 2006;173:2339–2356.

25. Chung W, Zou F. Mixed-effects models for GAW18 longitudinal blood pressure data. BMC Proc 2014;8(Suppl 1):S87.

26. Chen Z, Dunson DB. Random effects selection in linear mixed models. Biometrics 2003;59:762–769.

27. Lehmann EL, Casella G. Theory of Point Estimation. New York: Springer, 2006.

28. Jeffreys H. Theory of Probability. 3rd ed. Oxford: Clarendon, 1961.

29. Yandell BS, Mehta T, Banerjee S, Shriner D, Venkataraman R, Moon JY, et al. R/qtlbim: QTL with Bayesian Interval Mapping in experimental crosses. Bioinformatics 2007;23:641–643.

30. Spiegelhalter DJ, Best NG, Carlin BP, Van Der Linde A. Bayesian measures of model complexity and fit. J R Stat Soc Series B Stat Methodol 2002;64:583–639.

31. Robert CP, Titterington DM. Discussion of a paper by DJ Spiegelhalter et al. J R Stat Soc Series B Stat Methodol 2002;64:621–622.

32. Ando T. Bayesian predictive information criterion for the evaluation of hierarchical Bayesian and empirical Bayes models. Biometrika 2007;94:443–458.

33. Bayesian Parametric and Nonparametric Methods for Multiple QTL Mapping and SNP-Set Analysis. Chapel Hill: University of North Carolina at Chapel Hill, 2013.

34. Broman KW, Wu H, Sen S, Churchill GA. R/qtl: QTL mapping in experimental crosses. Bioinformatics 2003;19:889–890.

35. Geweke JF. Evaluating the accuracy of sampling-based approaches to the calculation of posterior moments. Minneapolis: Federal Reserve Bank of Minneapolis, 1991.

36. Gelman A, Rubin DB. Inference from iterative simulation using multiple sequences. Stat Sci 1992;7:457–472.

38. Lindley DV. Bayesian Statistics: A Review. Philadelphia: Society for Industrial and Applied Mathematics, 1972.
